The Theory of No Change

Christine Woerlen has authored two studies for the Climate-Eval Community of Practice (Guidelines to Climate Change Mitigation and Meta Evaluations of Climate Change Mitigation Evaluation). The original version of this article appeared in a publication of the European Evaluation Society (EES).

Evaluations of projects, programmes and policies are typically based on information supplied from within the narrow domain of the intervention: implementers, designers, recipients, affected stakeholders. Often, the programme logic is taken from project design documents and not questioned further. If a project fails, the programme logic might be discarded as faulty without further ado. But without going beyond the assumptions and logic that underlie the evaluandum, it is often hard to understand why an intervention might not have delivered the intended results. In such cases, learning from the evaluation is necessarily limited.

A meta-evaluation of climate change mitigation evaluations, supported by a community of practice hosted by the Evaluation Office of the Global Environment Facility (GEFEO), identified a series of factors underlying failures. This led to a better understanding of "why" interventions failed and directed attention towards policies that might have more impact on climate change trends. Whereas a classical theory of change postulates that certain causal linkages and assumptions make an intervention "work", a theory of no change puts forward hypotheses regarding why certain causal linkages are in fact broken or why interventions cannot (yet) work in identified circumstances.

The meta-evaluation led to the formulation of a framework that identified explicit barriers to change (in this case, intended market changes) that had prevented the up-scaling of desired practices, i.e. energy efficiency measures. A case study of ten evaluations of energy efficiency projects, policies and programmes in Thailand was undertaken to test whether twenty identified barriers helped explain market dynamics. A second case study in Poland was used for further testing. The case studies helped reduce the "Theory of No Change" (or TONC) framework to seventeen crucial barriers.

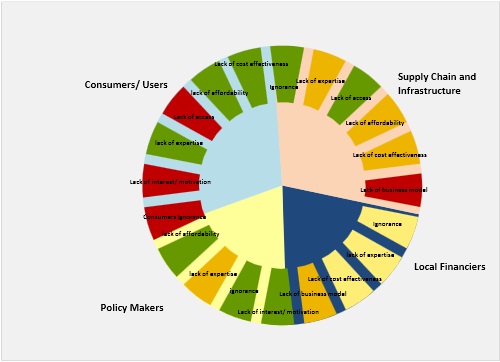

The idea behind the barrier framework is that it is not always the behavior of the target group that makes an intervention fail. Specifically, for the case of energy efficiency, the analysis identified four (macro-) groups of actors whose behaviors also shape outcomes: significant barriers to change are found among users of energy, suppliers of energy-using equipment, financiers and policy makers. In turn, each of these actors faces one or more of six generic types of barriers: lack of motivation, lack of awareness, lack of access to the "better" technology, lack of technical expertise, lack of affordability, and lack of cost effectiveness. In some cases, these barriers may already be addressed by the intervention and the original programme. In most cases where projects failed, however, they were not part of the original considerations but constituted "contextual challenges" to project success. A mapping tool was developed to illustrate the relevance of these barriers to the achievement of intended outcomes (cf. figure). The traffic light scheme, informed by evaluation data, allows for instant analysis of a given situation. Barriers that have proven to effectively limit change are marked in red. Those that exist but are not decisive are shown in orange and yellow, while barrier-free dimensions are displayed in green.
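To make the structure of the mapping tool concrete, the following minimal sketch represents it as a grid of actor groups against barrier types, each cell carrying a traffic-light rating. It is an illustration only, not the tool used in the meta-evaluation: the names `Rating`, `binding_barriers` and `render_grid` are hypothetical, the ratings are invented rather than taken from the Thailand or Poland case studies, and the full 4 x 6 grid is a simplification, since the final framework retains only seventeen barriers.

```python
from enum import Enum


class Rating(Enum):
    """Traffic-light rating for one barrier (the numeric order is an assumption)."""
    GREEN = 0   # barrier-free dimension
    YELLOW = 1  # barrier exists but is not decisive
    ORANGE = 2  # barrier exists but is not decisive
    RED = 3     # barrier has effectively limited change


# Actor groups and generic barrier types as named in the text.
ACTORS = ("users", "suppliers", "financiers", "policy makers")
BARRIER_TYPES = (
    "motivation",
    "awareness",
    "access to technology",
    "technical expertise",
    "affordability",
    "cost effectiveness",
)


def binding_barriers(ratings):
    """Return the (actor, barrier) pairs rated red, in grid order."""
    return [
        (actor, barrier)
        for actor in ACTORS
        for barrier in BARRIER_TYPES
        if ratings.get((actor, barrier)) is Rating.RED
    ]


def render_grid(ratings):
    """Return one text line per actor group; unrated cells show as '-'."""
    lines = []
    for actor in ACTORS:
        cells = [
            ratings[(actor, barrier)].name.lower()
            if (actor, barrier) in ratings
            else "-"
            for barrier in BARRIER_TYPES
        ]
        lines.append(f"{actor}: " + ", ".join(cells))
    return lines


# Hypothetical ratings, invented purely for illustration.
example_ratings = {
    ("users", "motivation"): Rating.GREEN,
    ("users", "awareness"): Rating.RED,
    ("suppliers", "technical expertise"): Rating.ORANGE,
    ("financiers", "affordability"): Rating.RED,
    ("policy makers", "motivation"): Rating.YELLOW,
}

for line in render_grid(example_ratings):
    print(line)
print(binding_barriers(example_ratings))
```

Listing the red cells separately mirrors the purpose of the traffic light scheme: it points the evaluator directly to the barriers that, according to the evaluation data, actually blocked change, including those faced by actors outside the intervention's target group.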

The generic challenge ahead is to integrate "theories of change" with "theories of no change". Following Ray Pawson, we believe that it is both possible and worthwhile to generate useful theories through "realist synthesis", as in this case, by giving negative proof a legitimate role in improving our understanding of what is happening compared with what was supposed to happen. In fact, a composite model of why things were supposed to happen, combined with a systematic assessment of why they did not happen, enables evaluators to look beyond the aspects that programme sponsors have already considered when designing an intervention. It provides the evaluator with a structure for a systematic and empirical analysis of possible root causes of implementation failure. This helps the evaluator better understand the situation and context of the evaluandum, and it reduces the risk of wrongly ascribing failure to design faults or of getting lost in a plethora of alternative explanations for the observed outcomes.

The power of this analytical tool has prompted the GEF Evaluation Office to examine the importance of negative evidence further through case studies. This framework for understanding "no change" is now being developed into a tool that can be used not only for ex post evaluations, but also for diagnostic purposes when new interventions are designed. Currently it is being applied to the identification of new areas of intervention for the German Ministry's National Climate Initiative. The climate and development evaluation community of practice, which developed this stakeholder-based conceptualization of the Theory of No Change, has presented it at evaluation events hosted by the International Programme Evaluation Network (IPEN) and the International Development Evaluation Association (IDEAS).

Preliminary reactions indicate that this model, or at least the fundamental thinking behind it, might be useful in many sectors and evaluation fields. The model itself or the basic approach can potentially be helpful in domains such as health care or education, where it is clear that changed behavior by various actors is in the interest of all, either because it is more cost effective than the current behavior or because it brings additional and originally unintended long-term benefits. In such cases, there may be non-obvious barriers to the desired behaviors, either within the target group of an intervention or among other actors that operate at levels disregarded at the design stage.

If you are looking for more information on this topic, you may wish to join Climate-Eval.org. The studies and a webinar can be downloaded at (Meta-Evaluation of Climate Mitigation Evaluations). To explore the issue further, please join the Climate-Eval community.